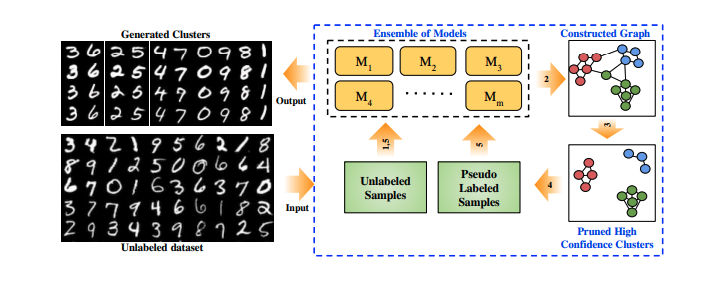

Self- and semi-supervised learning frameworks have made significant progress in training machine learning models with limited labeled data. This model, SCARF, builds embeddings of tabular data in a self-supervised fashion, similarly to SimCLR, using a contrastive approach.

For each sample (anchor) in a batch of size N, a positive view is built synthetically by corrupting a fixed number of the anchor's features, drawn at random each time, which gives a final batch of size 2N. The corruption simply replaces each selected feature's value with the value observed in another sample drawn at random.

A network f, the encoder, learns a representation of the anchor and the positive view. That representation is then fed to a projection head g, which is not used to generate the embeddings.

The similarity used is cosine similarity. Training maximizes the similarity between the anchor and its positive view using the NT-Xent loss from SimCLR. There are no explicitly defined negative views; instead, the procedure treats the 2(N - 1) other examples in the batch as negative views.
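The corruption step can be sketched in a few lines of PyTorch. This is a minimal illustration, not the package's own code: the function name `corrupt_batch` is hypothetical, and it assumes replacement values are drawn from other rows of the same batch rather than from the full training set.

```python
import torch


def corrupt_batch(x: torch.Tensor, corruption_rate: float) -> torch.Tensor:
    """Build one positive view per row by swapping in values from random rows.

    Hypothetical helper for illustration; assumes x has shape (batch, features).
    """
    batch_size, n_features = x.shape
    n_corrupt = int(corruption_rate * n_features)

    # For each row, choose a fixed number of feature positions to corrupt.
    mask = torch.zeros_like(x, dtype=torch.bool)
    for i in range(batch_size):
        idx = torch.randperm(n_features)[:n_corrupt]
        mask[i, idx] = True

    # For each (row, feature) cell, pick a random donor row and read the
    # donor's value for that same feature: replacement[i, j] = x[donor[i, j], j].
    donor_rows = torch.randint(0, batch_size, (batch_size, n_features))
    replacement = torch.gather(x, 0, donor_rows)

    # Keep the anchor's value where the mask is False, the donor's otherwise.
    return torch.where(mask, replacement, x)
```

Because the donor row is drawn uniformly, it can occasionally be the anchor itself, in which case that feature stays unchanged. Each column of the corrupted view still contains only values that were observed for that feature.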

Several learning paradigms, including self-supervised learning and unsupervised learning, have been proposed. Self-supervised learning leverages a carefully defined pretext task for supervised feature learning, where the supervision is generated automatically from the data itself. In this way, a vast number of training instances with supervision can be generated from unlabeled data to train a model for the pretext task. An excerpt from the article by Yann LeCun and Ishan Misra from Meta serves as a good introduction here:

> Supervised learning is a bottleneck for building more intelligent generalist models that can do multiple tasks and acquire new skills without massive amounts of labeled data.

Although deep neural networks (DNNs) constitute the state of the art in many tasks based on visual, audio, or text data, their performance on heterogeneous, tabular data is typically inferior to that of decision tree ensembles. DeepTLF was proposed to bridge the gap between the difficulty DNNs have with tabular data and the flexibility of deep learning under input heterogeneity. Other approaches include a self- and semi-supervised deep learning framework for tabular data that trains an encoder in a self-supervised fashion, and a pseudo-labeling framework for tabular data.

To use the model, import `SCARF` from the `model` module, preprocess your data, and create your PyTorch dataset `train_ds`. The example trains with `batch_size = 128` and `epochs = 5000` on a torch `device`, loads data with `DataLoader(train_ds, batch_size=batch_size, shuffle=True)`, and builds the optimizer over the model's `parameters()` with `lr = 0.001`. At each step the model receives the anchor and positive batches and returns both sets of embeddings, `emb, emb_corrupted = model(anchor, positive)`; the loss is then computed as `ntxent_loss(emb, emb_corrupted)`.

For more details, refer to the example notebook `example/example.ipynb`, and see `example/dataset.py` for how to supply samples to the model.

Parameters

- `emb_dim` (int): dimension of the embedding vector.
- `encoder_depth` (int): number of layers in the encoder MLP.
- `head_depth` (int): number of layers in the pre-training head.
- `corruption_rate` (float): fraction of features to corrupt.
- `pretraining_head` (nn.Module): pre-training head network.
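As a rough illustration of the NT-Xent objective used during training, the sketch below computes it from two batches of projected embeddings. It is an assumption-laden sketch rather than the package's `ntxent_loss`: the name `ntxent_loss_sketch` and the `temperature` argument with its default are hypothetical.

```python
import torch
import torch.nn.functional as F


def ntxent_loss_sketch(z_i: torch.Tensor, z_j: torch.Tensor,
                       temperature: float = 1.0) -> torch.Tensor:
    """NT-Xent over a batch of N anchors (z_i) and their N positive views (z_j)."""
    n = z_i.size(0)

    # Stack both views into 2N rows and L2-normalize, so that dot products
    # between rows are cosine similarities.
    z = F.normalize(torch.cat([z_i, z_j], dim=0), dim=1)
    sim = z @ z.t() / temperature  # shape (2N, 2N)

    # A row must never be compared with itself.
    self_mask = torch.eye(2 * n, dtype=torch.bool, device=z.device)
    sim = sim.masked_fill(self_mask, float("-inf"))

    # The positive for row k is row k + N, and vice versa; the remaining
    # 2(N - 1) rows act as negatives.
    targets = torch.cat([
        torch.arange(n, 2 * n, device=z.device),
        torch.arange(0, n, device=z.device),
    ])
    return F.cross_entropy(sim, targets)
```

Framing the loss as a cross-entropy over similarity rows keeps the sketch short: each of the 2N rows is a classification problem whose correct class is its positive view.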
